AI-referred traffic jumped 527% year-over-year between 2024 and 2025, and most brands still aren’t optimizing for it.

Search is no longer limited to blue links. More people are turning to LLM platforms like ChatGPT to get direct answers instead of clicking through traditional search results.

Instead of sending users to websites, these systems summarize information and cite sources inside the response.

This shift has created a new optimization layer: Generative Engine Optimization (GEO), the practice of making your content discoverable and citable by generative AI models.

If your brand is not showing up in AI-generated answers, you may already be losing visibility.

In this guide, we’ll break down what GEO is, why it matters, how it works, and which optimization strategies to use.

What Is Generative Engine Optimization (GEO)?

Generative Engine Optimization (GEO) refers to the process of improving your visibility on large language model (LLM) platforms such as ChatGPT, Perplexity, Google AI Overviews, and Claude.

Instead of ranking web pages in traditional search results, GEO focuses on helping these systems surface your brand directly within AI-generated answers.

GEO involves optimizing both content and technical signals so AI systems can crawl, parse, and cite your website accurately.

This includes fast-loading pages, clear site structure, strong topical authority, reliable citations, and consistent brand signals that increase the likelihood of your content being referenced by generative AI models.

Want to see how your site performs in AI search? Talk to our GEO Team.

Why Is Generative Engine Optimization Important?

Three reasons GEO should be a priority for growth-focused brands:

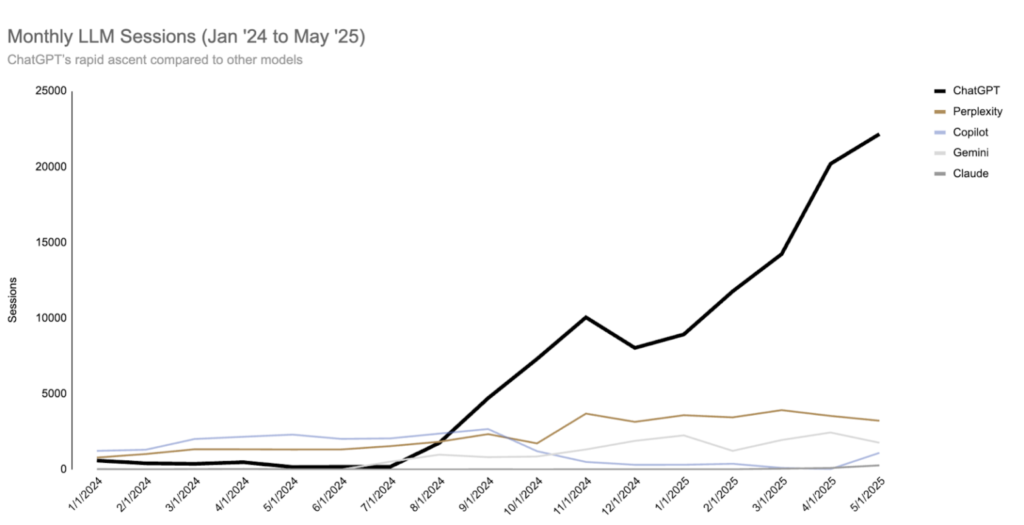

- Rising AI search usage: People are increasingly turning to AI-powered answer systems like ChatGPT, Perplexity, Gemini, and Google AI Overviews for information. In fact, total AI-referred sessions across a sample of sites jumped 527% year-over-year between early 2024 and mid-2025, showing that generative platforms are quickly becoming a key part of how people find information.

Source: Search Engine Land

- Dominate the new channel: Generative AI platforms represent a new visibility channel. If your content is not optimized for AI engines, competitors who appear in answers will own a larger share of voice, reducing your brand’s recognition in the places where audiences increasingly start their search and decision-making process.

- Get more sales & leads: As users adopt AI-first search behaviors, brands visible on LLM platforms can capture high-intent customers. Being included in AI-generated answers helps maintain and grow future revenue, whereas a lack of visibility can directly impact lead flow and sales opportunities as search evolves.

How Generative Engine Optimization Works

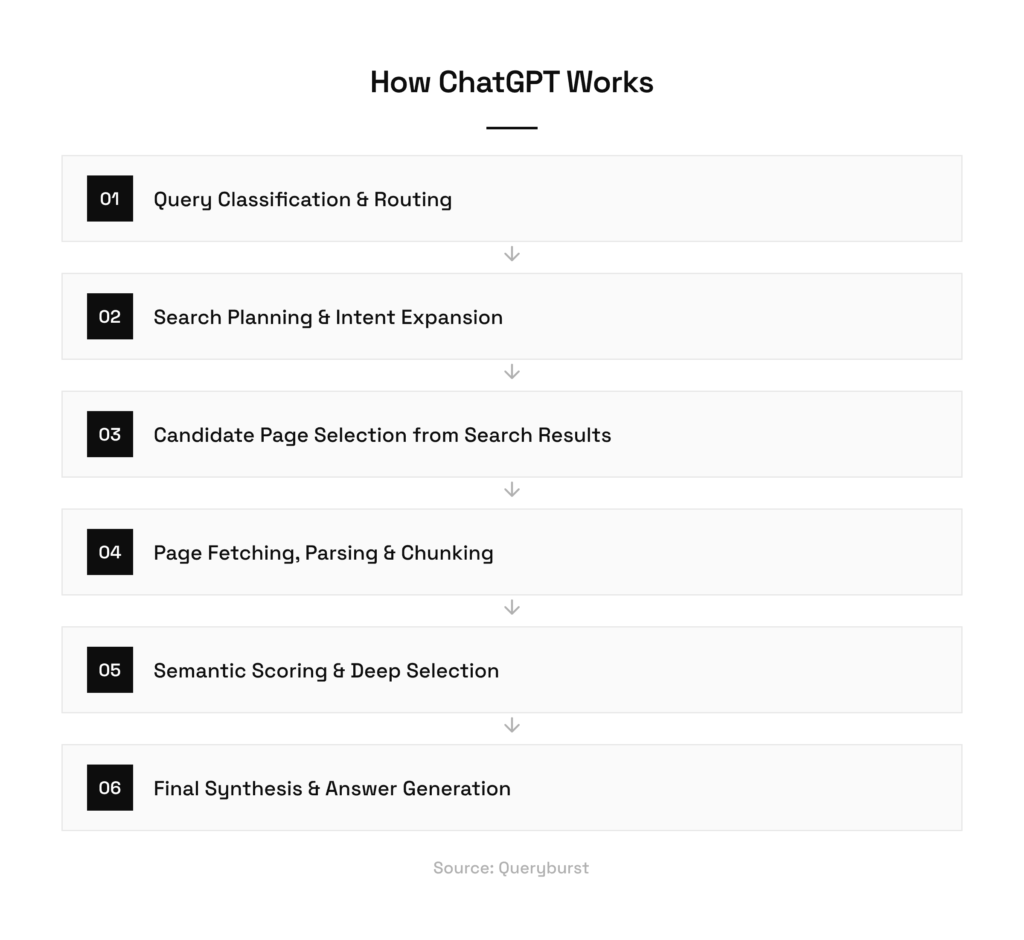

Generative engines do not publicly disclose their full retrieval and ranking logic. However, the steps below follow the approach shared by David McSweeney on how ChatGPT works.

Step 1: Query Classification and Routing

When a user submits a query, a classification model first evaluates it to decide whether web search is required.

Simple factual questions may be answered directly from the model’s training data, while more complex or ambiguous queries are routed into a search and retrieval pipeline. This initial decision determines whether external content is even considered, making it the first gate GEO must pass.

Step 2: Search Planning and Intent Expansion

If a search is needed, a secondary reasoning model plans what to look for.

It generates both traditional keyword-style queries and longer semantic queries (also referred to as Query Fan Out) designed to capture user intent. These semantic queries often include synonyms and related terms, allowing the system to retrieve content that aligns closely with the meaning of the question rather than exact phrasing.

Step 3: Candidate Page Selection from Search Results Based on Metadata

The system retrieves a broad set of pages from search results and performs an initial filtering pass.

Using metadata such as titles, descriptions, URLs, and trust signals, it narrows dozens of results down to a smaller group of “candidate” pages.

This makes a page’s metadata extremely important.

Step 4: Page Fetching, Parsing, and Chunking

Selected pages are fetched under strict time limits (under 2 seconds). Fast-loading pages are fully processed, while slow pages are dropped.

The content is then parsed and split into small chunks to prepare it for semantic analysis. This makes site speed, clean HTML, and accessible content critical for GEO success.
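To make the chunking step concrete, here is a minimal sketch of splitting page text into small paragraph-based chunks. The function name `chunk_text` and the 80-word limit are illustrative assumptions; the actual chunk sizes and splitting rules used by generative engines are not public.

```python
import re


def chunk_text(text: str, max_words: int = 80) -> list[str]:
    """Split text into paragraph-based chunks of at most max_words words.

    max_words is an assumed value for illustration only.
    """
    chunks: list[str] = []
    current: list[str] = []
    for paragraph in re.split(r"\n\s*\n", text.strip()):
        words = paragraph.split()
        if not words:
            continue
        # Close the current chunk if this paragraph would push it over the limit.
        if current and len(current) + len(words) > max_words:
            chunks.append(" ".join(current))
            current = []
        current.extend(words)
        # A single oversized paragraph is cut at the word limit.
        while len(current) > max_words:
            chunks.append(" ".join(current[:max_words]))
            current = current[max_words:]
    if current:
        chunks.append(" ".join(current))
    return chunks
```

Running this over your own page text is a quick way to see whether each chunk still makes sense when read in isolation, which is roughly what a retrieval system has to work with.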

Step 5: Semantic Scoring and Deep Selection

All content chunks are embedded and scored against the user’s intent using vector similarity.

The highest-scoring chunks from each page are reviewed, and only a few pages are selected for deep reading. These pages provide the core context used to generate the final answer, while others may appear only as secondary citations.

To qualify at this step, the page needs to cover the entities relevant to the query so that its chunks score well on vector similarity.
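The scoring idea can be sketched with cosine similarity. The example below uses simple word-count vectors as a stand-in for real embeddings, which is an assumption made to keep the sketch dependency-free; production systems use learned embedding models, and the function names here are hypothetical.

```python
import math
import re
from collections import Counter


def bag_of_words(text: str) -> Counter:
    """Toy 'embedding': lowercase word counts (a stand-in for a real model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine_similarity(a: Counter, b: Counter) -> float:
    """Cosine of the angle between two sparse count vectors."""
    dot = sum(a[term] * b[term] for term in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def rank_chunks(query: str, chunks: list[str]) -> list[tuple[float, str]]:
    """Return (score, chunk) pairs sorted from most to least similar."""
    query_vector = bag_of_words(query)
    scored = [(cosine_similarity(query_vector, bag_of_words(c)), c) for c in chunks]
    return sorted(scored, key=lambda pair: pair[0], reverse=True)
```

Even in this toy version, a chunk that never mentions the terms and entities in the query scores zero, which is why entity coverage matters at this stage.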

Step 6: Final Synthesis and Answer Generation

The final generation model combines selected page content with trusted internal sources, such as popular news providers, forums, or cached summaries.

Using this mixed context, it produces a single synthesized response.

Brands that consistently pass every stage of this pipeline are far more likely to be cited in AI-generated answers.

Generative Engine Optimization Techniques

Below is a list of techniques and activities that increase your likelihood of being mentioned by LLMs.

Technical Optimization

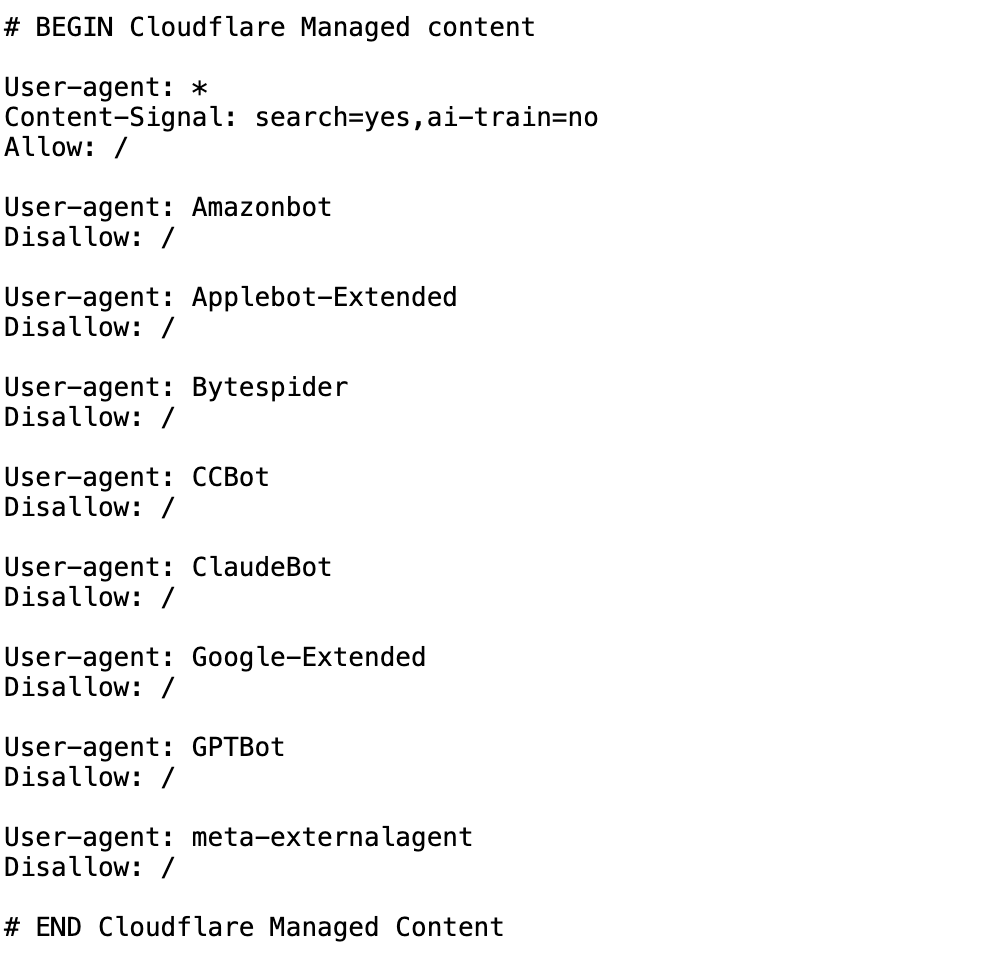

1. Crawlability:

Pages must be accessible to LLM bots without login barriers, IP blocking, or restrictive robots.txt rules.

Review your robots.txt file to check if you are unknowingly blocking any of the LLM bots.

Here’s an example robots.txt from a client’s website. During an audit, we found that it was blocking ChatGPT, ClaudeBot, and a few other LLM bots.

(Client’s website accidentally blocking LLM Bots)
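A robots.txt review like this can be partly automated. The sketch below uses Python's standard `urllib.robotparser` to test a robots.txt file against a list of AI crawler user-agent tokens. The token list and the sample file are assumptions for illustration (the sample is not the client's actual file), so check each vendor's current documentation for the tokens it uses.

```python
from urllib.robotparser import RobotFileParser

# Assumed list of AI crawler user-agent tokens; verify against vendor docs.
AI_USER_AGENTS = ["GPTBot", "OAI-SearchBot", "ChatGPT-User", "ClaudeBot", "PerplexityBot"]


def blocked_ai_bots(robots_txt: str, url: str = "https://example.com/") -> list[str]:
    """Return the AI user agents that robots_txt disallows from fetching url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in AI_USER_AGENTS if not parser.can_fetch(agent, url)]


# Hypothetical robots.txt that blocks two AI crawlers but allows everyone else.
SAMPLE_ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: ClaudeBot
Disallow: /

User-agent: *
Allow: /
"""
```

Calling `blocked_ai_bots(SAMPLE_ROBOTS_TXT)` returns `["GPTBot", "ClaudeBot"]` for this sample. Note that robots.txt is only one layer: firewall or CDN rules can block the same bots without any trace in this file.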

2. Avoid Heavy Client-Side JavaScript:

According to a 2024 study by Vercel, most AI crawlers (OpenAI, Anthropic, Meta, ByteDance) cannot render JavaScript.

If you want your website to be visible in LLMs, use Server-Side Rendering (SSR) or Static Site Generation (SSG). Excessive client-side rendering increases fetch failures and content truncation during AI retrieval.

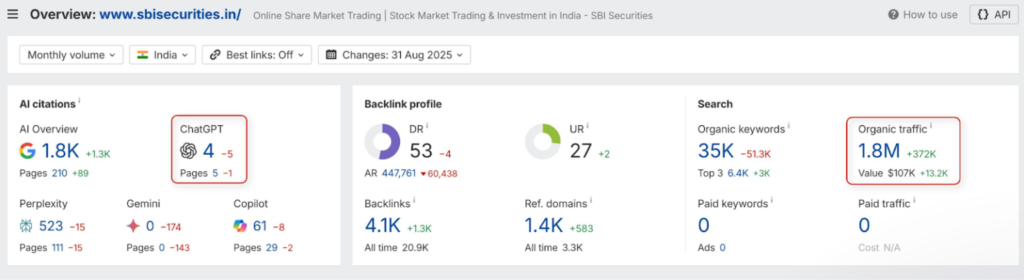

Below is an example of an enterprise company in the finance space with 1.8 million in monthly traffic. Because their website was built with Client-Side Rendering (CSR), most of their pages were invisible to LLM crawlers like ChatGPT. When we performed the audit, only 4 out of 700 pages were cited in ChatGPT.
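One rough way to spot this problem is to count how much readable text exists in the raw HTML before any JavaScript runs. The sketch below does that with Python's standard `html.parser`; the class and function names are hypothetical, and a low count is only a hint that a page depends on client-side rendering, not proof.

```python
from html.parser import HTMLParser


class VisibleTextExtractor(HTMLParser):
    """Collect text outside <script>, <style>, and <template> elements."""

    SKIPPED_TAGS = {"script", "style", "template"}

    def __init__(self) -> None:
        super().__init__()
        self._skip_depth = 0
        self.parts: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED_TAGS:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED_TAGS and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        # Keep only non-empty text that is not inside a skipped element.
        if self._skip_depth == 0 and data.strip():
            self.parts.append(data.strip())


def visible_word_count(raw_html: str) -> int:
    """Count words a non-rendering crawler could read from raw_html."""
    extractor = VisibleTextExtractor()
    extractor.feed(raw_html)
    extractor.close()
    return len(" ".join(extractor.parts).split())
```

A client-rendered shell such as `<div id="root"></div>` followed by a script tag yields a count of zero, while a server-rendered page yields roughly the word count a visitor would see.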

3. Structured Data:

Using structured data such as Organization, Article, FAQ, and Product schema helps AI models understand entities, relationships, and context.

Microsoft Bing, in a recent guide, emphasized the importance of structured data and how it helps LLMs and agentic browsers retrieve data.
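As an illustration, Organization schema is usually embedded as a JSON-LD script tag. The sketch below builds one in Python; the function name and the example values are placeholders, and only a few common schema.org properties (`name`, `url`, `sameAs`) are shown.

```python
import json


def organization_jsonld(name: str, url: str, same_as: list[str]) -> str:
    """Build a JSON-LD <script> tag describing an Organization."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "sameAs": same_as,  # profiles that identify the same brand elsewhere
    }
    # Escape "</" so a value can never close the script tag early;
    # "\/" is a valid JSON escape for "/".
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'
```

For example, `organization_jsonld("Example Co", "https://example.com", ["https://www.linkedin.com/company/example"])` produces a tag you can place in the page's HTML head. Validate the output with a schema testing tool before deploying it.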

4. Faster Loading:

Aim for a TTFB and full HTML delivery of under 2 seconds. AI systems apply aggressive timeouts during page retrieval. As mentioned by David McSweeney, sites that load within 2 seconds are more likely to be included in citations.
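A simple timing check can show whether a page is near that limit. The sketch below measures the time to the first body byte and to full HTML delivery using Python's standard `urllib.request`. The function name and the injectable `opener` parameter are assumptions added for illustration, and the numbers include DNS and TLS setup from your own location, so they will differ from what an AI fetcher experiences.

```python
import time
import urllib.request

FETCH_BUDGET_SECONDS = 2.0  # threshold taken from the guidance above


def measure_fetch(url: str, opener=urllib.request.urlopen, timeout: float = 10.0) -> dict:
    """Time the first body byte (approximate TTFB) and full HTML delivery."""
    start = time.perf_counter()
    with opener(url, timeout=timeout) as response:
        response.read(1)  # wait for the first byte of the body
        ttfb = time.perf_counter() - start
        response.read()  # download the rest of the HTML
        total = time.perf_counter() - start
    return {
        "ttfb": ttfb,
        "total": total,
        "within_budget": total <= FETCH_BUDGET_SECONDS,
    }
```

Run it several times and from more than one region if you can, since a single measurement says little about how the page behaves under load.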

5. Monitor Your Presence on Bing Alongside Google:

Since ChatGPT uses the Bing Search API to retrieve web results for many queries, maintaining strong visibility in Bing is essential for improving citation chances in AI-generated answers.

Content Optimization

1. Start with a Summary, Front-Load the Important Points:

Growth Memo’s analysis found that 44.2% of all citations come from the first 30% of a page’s text, which suggests that generative engines heavily prioritize introductions. AI models tend to extract the “who, what, and where” from the top of the page, so placing key insights in the opening section significantly increases the likelihood of being cited.
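You can check your own pages against that 30% mark with a few lines of code. The sketch below reports where a key phrase first appears as a fraction of the page text; the function names are hypothetical, and the 0.30 cutoff simply mirrors the statistic above rather than any documented rule.

```python
from typing import Optional


def relative_position(page_text: str, key_phrase: str) -> Optional[float]:
    """Return where key_phrase first appears, as a fraction of the text length."""
    index = page_text.lower().find(key_phrase.lower())
    if index == -1 or not page_text:
        return None  # phrase not found (or nothing to search)
    return index / len(page_text)


def is_front_loaded(page_text: str, key_phrase: str, cutoff: float = 0.30) -> bool:
    """True if key_phrase first appears within the first `cutoff` of the text."""
    position = relative_position(page_text, key_phrase)
    return position is not None and position <= cutoff
```

Pass in the plain text of a page and the sentence you most want cited. If `is_front_loaded` returns `False`, consider moving that statement into the introduction.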

2. Entity-First Content:

Clearly define the primary brand, product, or concept early, so AI systems understand what the page is about. We use Surfer SEO to ensure all our content has relevant entities.

3. Structure Your Writing:

Use short paragraphs, bullets, and clear subheadings to make content easier to scan and interpret.

Since LLMs break pages into smaller content chunks before scoring relevance, well-structured formatting increases the chances that the right section gets extracted and cited.

4. Support with Facts and Quotes:

Include verified statistics, expert quotes, and sourced claims to increase trust and citation likelihood.

5. Create Topical Clusters:

Build interconnected articles around a core topic and link them strategically to show subject depth. This helps AI systems understand the relationship between pages and strengthens your authority within that topic area.
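One common way to structure a cluster is a hub-and-spoke model, where a pillar page links to every supporting article and each article links back. That model is an assumption here, not something the engines prescribe. The sketch below, with a hypothetical function name and example URLs, lists the links missing from such a cluster.

```python
def missing_cluster_links(pillar: str, links: dict[str, set[str]]) -> list[tuple[str, str]]:
    """Return (from_page, to_page) pairs missing from a hub-and-spoke cluster.

    `links` maps each page URL to the set of internal URLs it links to.
    """
    missing: list[tuple[str, str]] = []
    pillar_targets = links.get(pillar, set())
    for page, targets in links.items():
        if page == pillar:
            continue
        if pillar not in targets:
            missing.append((page, pillar))  # article does not link to the pillar
        if page not in pillar_targets:
            missing.append((pillar, page))  # pillar does not link to the article
    return sorted(missing)
```

For example, with `links = {"/geo": {"/geo/robots"}, "/geo/robots": {"/geo"}, "/geo/schema": set()}` and `"/geo"` as the pillar, the function reports that `/geo` and `/geo/schema` do not link to each other.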

6. Keep Content Fresh:

Research from Ahrefs shows that AI systems tend to cite significantly fresher content, which suggests a 12-month refresh window. Some industry perspectives, such as Hashmeta’s, define freshness more strictly, as updates within the last 90 days.

Regular updates (every 90 days to 12 months) help sustain rankings, credibility, and visibility across both traditional and AI-driven search.
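A refresh schedule is easy to audit if you track each page's last update date. The sketch below flags pages older than a chosen window; the function name and sample URLs are hypothetical, and the default of 365 days reflects the 12-month window above (pass 90 for the stricter definition).

```python
from datetime import date


def stale_pages(last_updated: dict[str, date], today: date, max_age_days: int = 365) -> list[str]:
    """Return URLs whose last update is more than max_age_days before today."""
    return sorted(
        url
        for url, updated in last_updated.items()
        if (today - updated).days > max_age_days
    )
```

For instance, with `{"/a": date(2025, 1, 1), "/b": date(2023, 6, 1)}` and `today=date(2025, 6, 1)`, only `/b` is flagged at the 12-month window, while both pages are flagged at 90 days.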

7. LLM-Friendly Content Formats:

Publish comparisons, reviews, listicles, and direct answer articles aligned with conversational search intent.

Citation Optimization

1. Listicle Style Backlinks:

Across all citations analyzed, “best X” blog lists represented 43.8% of all page types cited in generative search results. Appearing in comparison articles and “best of” lists increases the likelihood of being cited by generative engines.

2. Reddit & Quora:

Semrush’s AI search research shows that Quora and Reddit are among the most frequently cited sources in AI-generated summaries, with Quora ranking first and Reddit second in citation volume. Brand mentions and helpful contributions on these platforms increase the likelihood of your brand being referenced by AI systems within generative responses.

Our Reddit marketing services help brands build authentic, citation-worthy presence on Reddit.

3. Build More Brand Citations:

Pursue guest posts on industry publications, submit to niche directories, seek podcast appearances, and pitch expert commentary. Each mention creates a citation signal that AI systems pick up.

For a deeper breakdown of LLM ranking tactics with real-world examples, read our detailed guide on how to rank in large language models.

Conclusion

Generative Engine Optimization is still evolving, but the direction is clear. As users shift from traditional search results to AI-generated answers, being visible on generative engines is quickly becoming a requirement for long-term business growth.

At Growffic, we help brands adapt to this shift with data-driven generative engine optimization services designed around how generative engines actually work. If AI search visibility matters to your business, now is the right time to invest and stay ahead.